Researchers at Samara University have developed a novel approach to building optical transformers: optical implementations of the transformer neural network architecture that underpins modern large AI models capable of analyzing and classifying images and human speech. Unlike conventional digital neural networks that rely on electronic computation, optical transformers process data using streams of photons and specialized optical components.

While optical neural networks already offer significant advantages in speed and energy efficiency, operating without the need for high-power electrical systems, they have historically lagged behind their digital counterparts in computational accuracy. The Samara team has now demonstrated, in a series of numerical experiments, that their new method enables optical transformers to surpass classical digital neural networks in accuracy.

The research was conducted at the Second-Wave Artificial Intelligence Research Center, established at Samara University under Russia’s federal “Artificial Intelligence” project. The findings have been accepted for presentation at ACM SenSys 2026 (ACM Conference on Embedded Networked Sensor Systems)—one of the world’s most prestigious forums in sensor systems and the Internet of Things—taking place in France in May 2026.

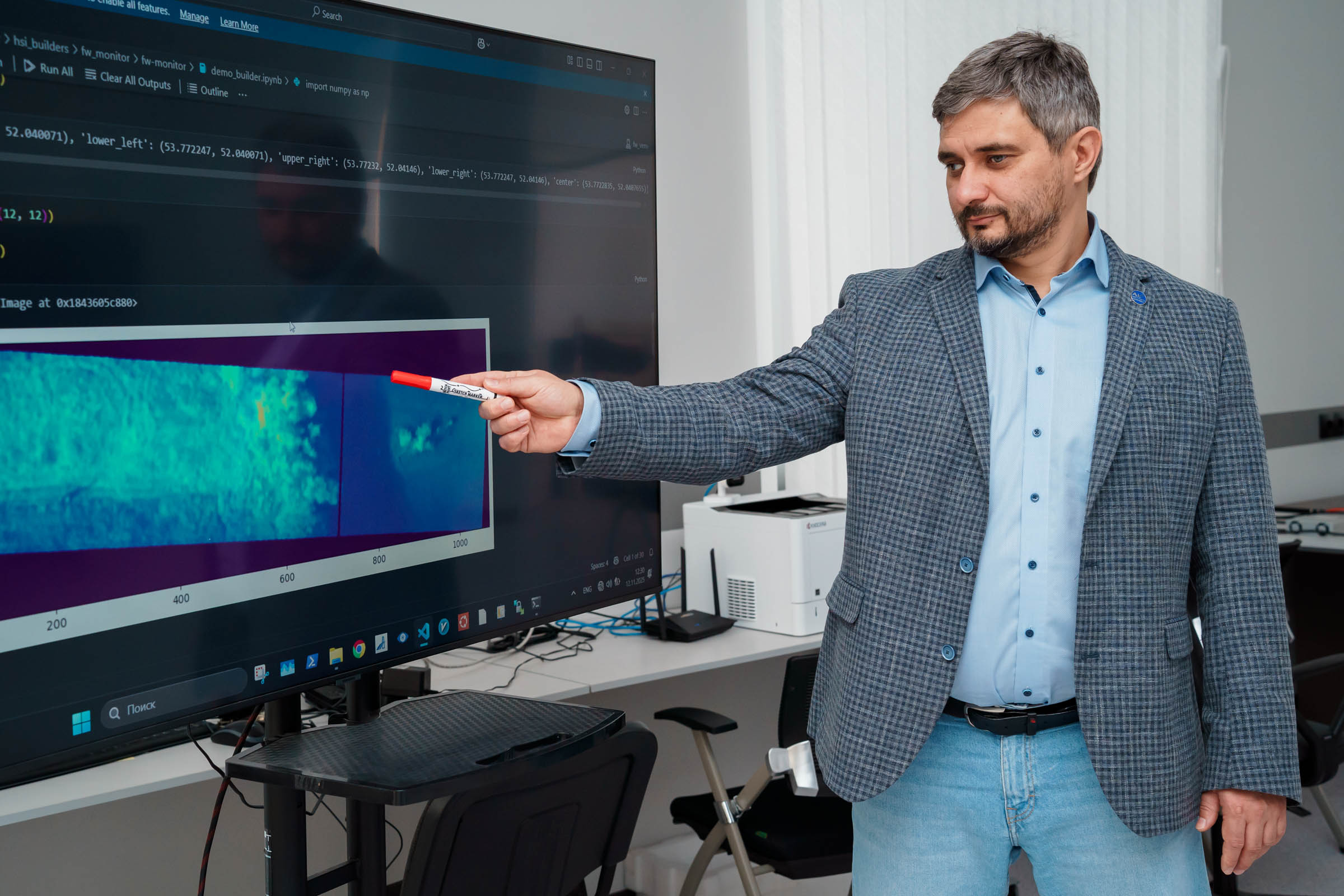

“The global trend in optical AI has been to catch up with digital neural networks in terms of accuracy,” said Professor Artem Nikonorov, Director of the Institute of Artificial Intelligence and Head of the “Intelligent Mobility of Multifunctional Unmanned Aerial Systems” Center at Samara University.

“We are the first to show that not only can optical transformers match digital models—but they can actually outperform them. This achievement is the result of close collaboration between experts in optics and artificial intelligence. While the core optical matrix multiplication scheme we used is well known, we modified it by replacing conventional elements with diffractive optical components wherever possible. More importantly, we rethought how multiplication errors are handled. In optical systems, error typically increases with matrix size—but we integrated a numerical model of optical multiplication directly into the neural network training process. As a result, the network learns to compensate for these errors autonomously, without sacrificing final accuracy.”

According to Nikonorov, the optical multiplication process involves tunable parameters that are optimized during training, enabling the system not only to correct inaccuracies but also to exceed the accuracy of digital baselines.

“It appears we’ve created a kind of generalized, parameterized version of matrix multiplication that adapts to the specific task during transformer training—essentially a ‘personalized’ multiplication tailored to each application,” he explained. “Moreover, by introducing an additional trainable diffractive element into the optical transformer architecture, we achieved further gains in accuracy.”
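The idea of training through a numerical model of the multiplication can be illustrated with a toy example. The sketch below is not the Samara team's optical model: the function `simulated_optical_matmul`, its attenuation formula, the noise level, and the single trainable `gain` parameter are all hypothetical stand-ins chosen for illustration. It only shows how a tunable parameter inside an imperfect multiplication can be fitted by gradient descent so that the systematic error is compensated.

```python
import numpy as np

def attenuation(n):
    # Assumed systematic loss that grows with the inner dimension n
    # (a made-up formula, used only for this illustration).
    return 1.0 / (1.0 + 0.01 * n)

def simulated_optical_matmul(x, w, gain, rng=None, noise=0.0):
    """Toy stand-in for an imperfect optical matrix multiply."""
    n = w.shape[0]
    y = gain * attenuation(n) * (x @ w)
    if rng is not None and noise > 0.0:
        # Additive noise whose standard deviation grows with sqrt(n).
        y = y + rng.normal(0.0, noise * np.sqrt(n), size=y.shape)
    return y

def fit_gain(x, w, steps=200, lr=0.5, noise=0.01, seed=0):
    """Fit the scalar gain by gradient descent on the mean squared error
    between the simulated product and the exact product."""
    rng = np.random.default_rng(seed)
    exact = x @ w
    att = attenuation(w.shape[0])
    gain = 1.0
    for _ in range(steps):
        approx = simulated_optical_matmul(x, w, gain, rng, noise)
        residual = approx - exact
        # d(approx)/d(gain) = att * exact, so this is the MSE gradient.
        grad = 2.0 * np.mean(residual * att * exact)
        gain -= lr * grad
    return gain

def mse(x, w, gain):
    # Noise-free error of the simulated product against the exact one.
    return float(np.mean((simulated_optical_matmul(x, w, gain) - x @ w) ** 2))

data_rng = np.random.default_rng(42)
x = data_rng.normal(size=(256, 64))
w = data_rng.normal(size=(64, 32)) / np.sqrt(64)

gain = fit_gain(x, w)
error_before = mse(x, w, 1.0)
error_after = mse(x, w, gain)
print(f"fitted gain: {gain:.3f}")
print(f"MSE before: {error_before:.4f}, after: {error_after:.6f}")
```

In this toy setting the fitted gain approaches the inverse of the assumed attenuation, so the error of the simulated product falls sharply. A real system would have many such parameters and would be trained jointly with the network weights.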

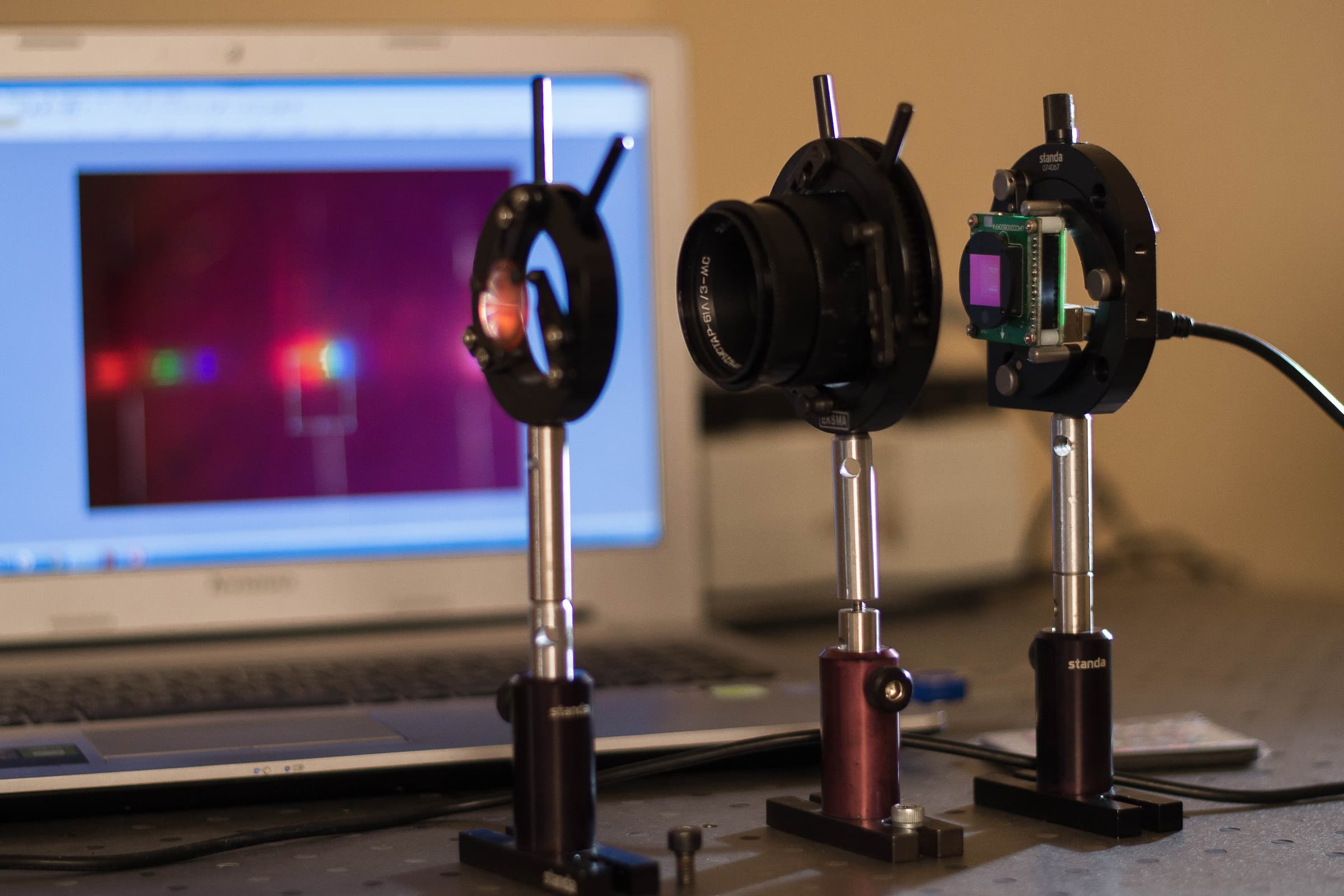

These conclusions were validated through a series of experiments using numerical simulations of the modified optical scheme embedded within standard neural networks. Test cases included compact language models (Tiny LLMs), lightweight computer vision architectures (Tiny ViT), and a credit-scoring neural model.

“Our approach consistently outperformed traditional digital transformers across three domains: text processing, image analysis, and financial risk assessment,” Nikonorov noted. “After adding a dedicated trainable diffractive component responsible for parameterization, we observed an additional accuracy boost. For instance, we reduced the model’s perplexity by a factor of 1.5, and lower perplexity means better, more coherent, and more accurate text generation.”
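To put the perplexity figure in context: perplexity is the exponential of the average negative log-likelihood per token, so a 1.5-fold reduction corresponds to a drop of ln 1.5 ≈ 0.405 nats in per-token cross-entropy. The short sketch below illustrates the relationship. The token probabilities (1/30 and 1/20) are hypothetical values chosen to produce a 1.5-fold ratio and are not figures from the study.

```python
import math

def perplexity(token_log_probs):
    """Perplexity = exp of the mean negative log-likelihood per token."""
    mean_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(mean_nll)

# Hypothetical per-token log-probabilities for two models on the same text.
baseline_log_probs = [math.log(1 / 30)] * 4   # each token given probability 1/30
improved_log_probs = [math.log(1 / 20)] * 4   # each token given probability 1/20

baseline_ppl = perplexity(baseline_log_probs)
improved_ppl = perplexity(improved_log_probs)
ratio = baseline_ppl / improved_ppl

# The same improvement expressed as a cross-entropy difference in nats.
cross_entropy_drop = math.log(baseline_ppl) - math.log(improved_ppl)

print(f"perplexity ratio: {ratio:.3f}")
print(f"cross-entropy drop (nats/token): {cross_entropy_drop:.3f}")
```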

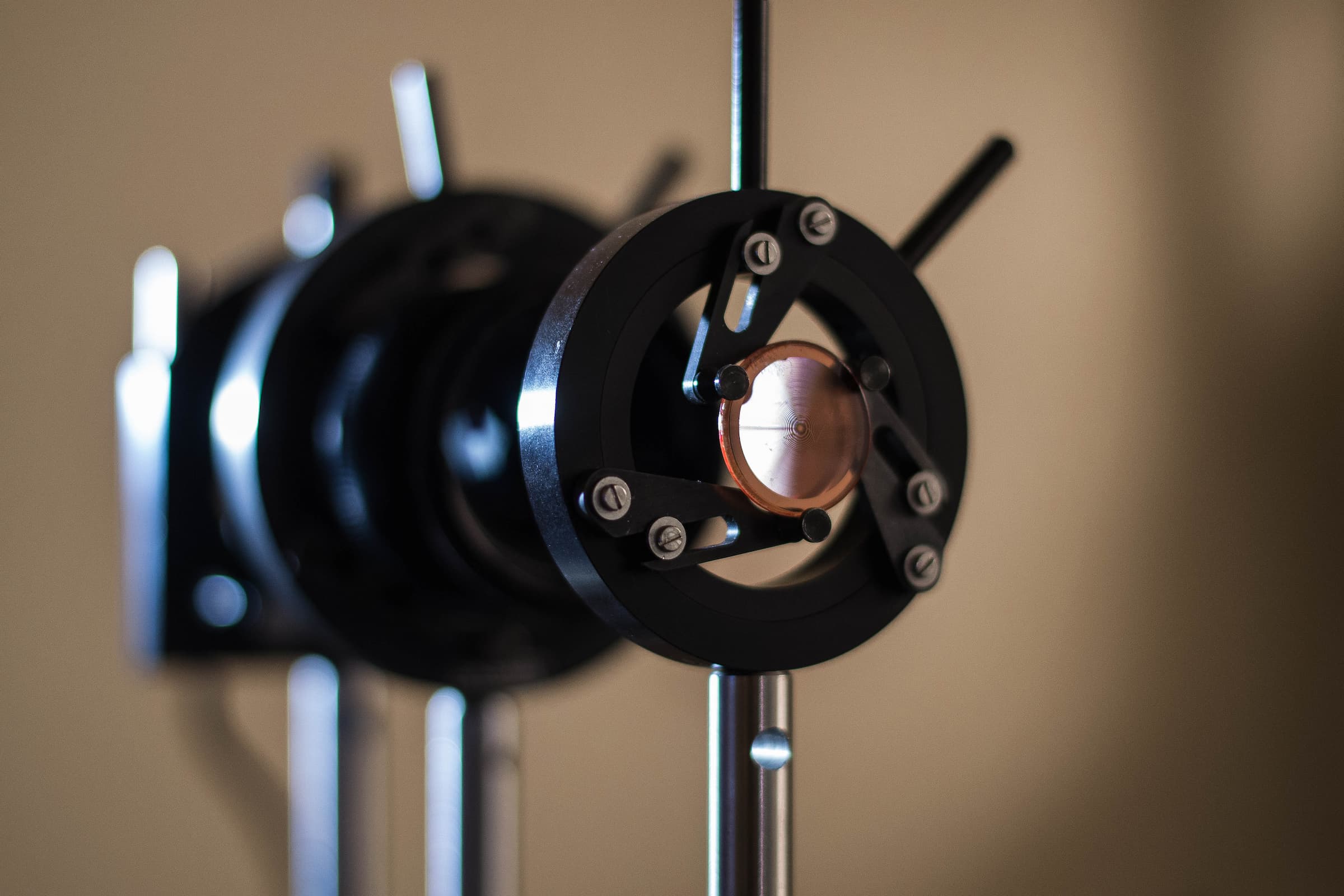

The key innovation, a miniature diffractive optical element just a few millimeters in diameter, was developed at Samara University. Made from polymer photoresist or fused quartz, such components are already used in ultra-compact, lightweight optical systems for nanosatellites. Crucially, this accuracy-enhancing element is fully passive and consumes no energy, in stark contrast to digital AI, where higher accuracy demands more computation and thus greater power draw.

“The massive energy consumption of GPU clusters is one of the main bottlenecks in AI development today,” Nikonorov emphasized. “Optical computing represents an emerging solution to this challenge. The future undoubtedly belongs to optics. Optical neural networks will enable the creation of high-performance, compact, and ultra-energy-efficient AI systems for drones, robotics, autonomous vehicles, and IoT devices.”

The research team’s next step is to develop a hardware prototype of the modified optical transformer—specifically optimized for integration into onboard drone systems.

For reference:

Samara University is a globally recognized leader in diffractive optics and image processing. Its expertise stems from over 40 years of research by the School of Information Optics and Photonics, founded and led by Academician Viktor Soifer, President of Samara University. The university’s first landmark paper demonstrating the viability of diffractive optics in imaging systems was presented in May 2015 at the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), the world’s premier conference in the field.